VerMind-V

A multimodal extension of VerMind that brings vision understanding capabilities. Process images and text together for rich multimodal reasoning.

VerMind 的多模态扩展,为强大的语言模型主干带来视觉理解能力。同时处理图像和文本,实现丰富的多模态推理。

🎯 Interactive Web Demo

🎯 交互式 Web 演示

Experience vision-language understanding in your browser

在浏览器中体验视觉语言理解

VLM Mode - Vision + Language VLM 模式 - 视觉+语言

python3 scripts/web_demo.py --model_path /path/to/vermind-v --mode vlm

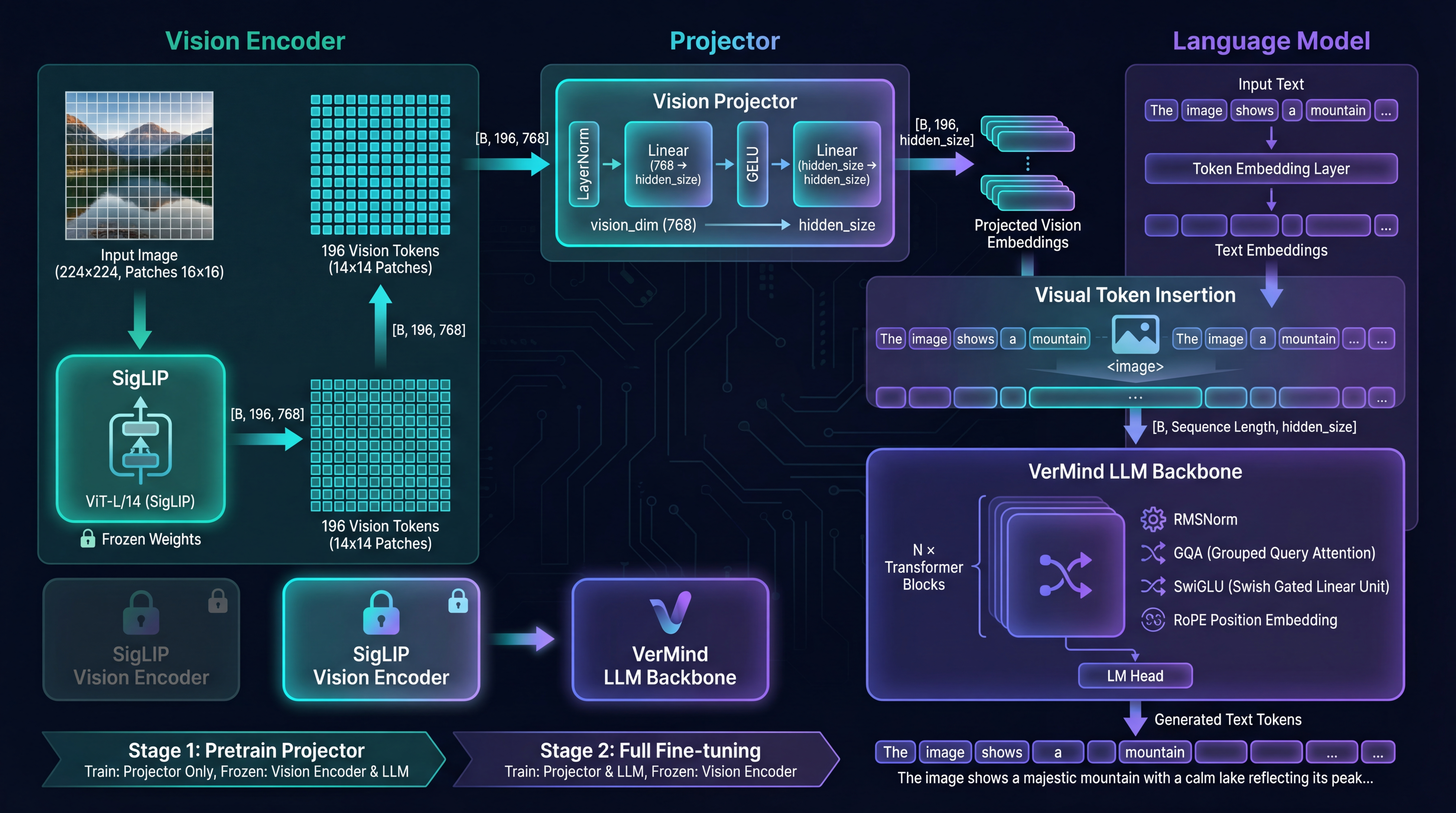

Architecture

架构

Three-component architecture for seamless multimodal integration

三组件架构实现无缝多模态集成

Features

功能特性

Built for multimodal understanding and generation

为多模态理解和生成而构建

Vision Encoder Integration

视觉编码器集成

Seamlessly integrates vision encoders with the language model for unified processing.

将视觉编码器与语言模型无缝集成,实现统一处理。

Image Captioning

图像描述

Generate detailed, accurate descriptions of images with contextual understanding.

结合上下文理解生成详细、准确的图像描述。

Visual Question Answering

视觉问答

Answer questions about image content with precise visual grounding.

以精确的视觉基础回答关于图像内容的问题。

Visual Reasoning

视觉推理

Perform complex reasoning requiring understanding of both visual and textual information.

执行需要理解视觉和文本信息的复杂推理。

Efficient Training

高效训练

Two-stage training: projector pre-training followed by visual instruction tuning.

两阶段训练:投影器预训练后进行视觉指令微调。

vLLM Compatible

vLLM 兼容

Deploy with vLLM for high-throughput multimodal inference.

使用 vLLM 部署,实现高吞吐量多模态推理。

Training Pipeline

训练流程

Two-stage training with unified script

使用统一脚本进行两阶段训练

Vision-Language Pre-training

视觉-语言预训练

bash examples/pretrain_vlm.shFreeze LLM, train only vision projector on image-text pairs

冻结 LLM,仅在图文对上训练视觉投影器

Visual Instruction Tuning

视觉指令微调

bash examples/vlm_sft.shFull model training on visual instruction-following data

在视觉指令遵循数据上进行全模型训练

Unified Training Script

统一训练脚本

python train/train_vlm.py \

--stage {pretrain|sft} \

--from_weight ./output/sft/full_sft_768 \

--data_path ./dataset/vlm_data.parquet \

--vision_encoder_path ./siglip-base-patch16-224